@[TOC]

Environment: CentOS 6.7, Hadoop 2.7.3, HBase 1.2.5, ZooKeeper 3.4.6

Installing Flume 1.7

Log in as the root user.

Change to the /home directory.

Download Flume 1.7:

    wget http://archive.apache.org/dist/flume/1.7.0/apache-flume-1.7.0-bin.tar.gz

解压

1

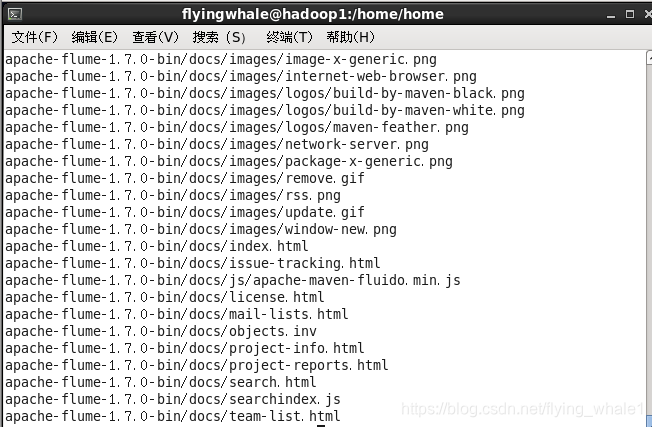

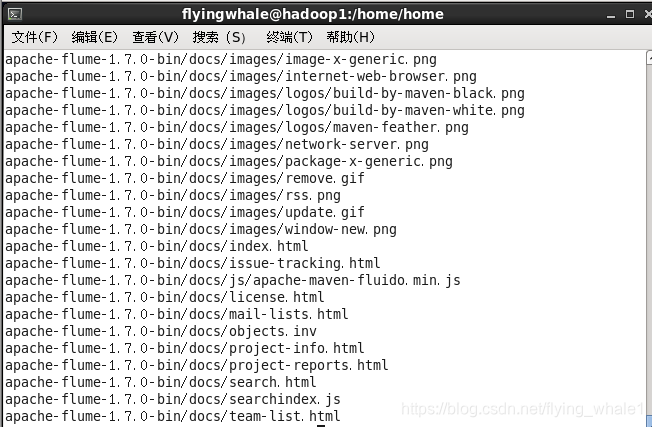

| tar xvf apache-flume-1.7.0-bin.tar.gz

|

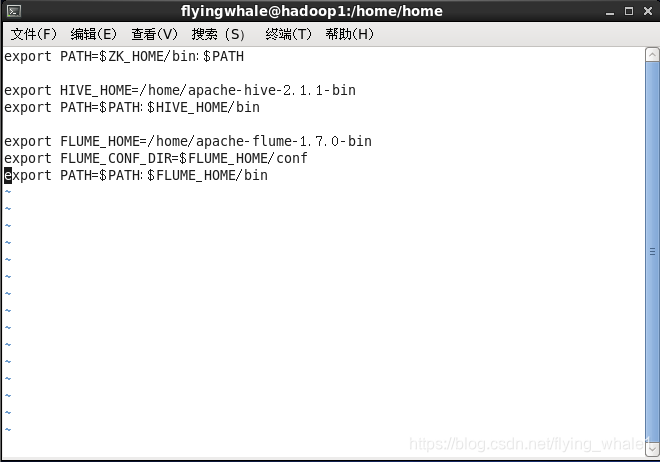

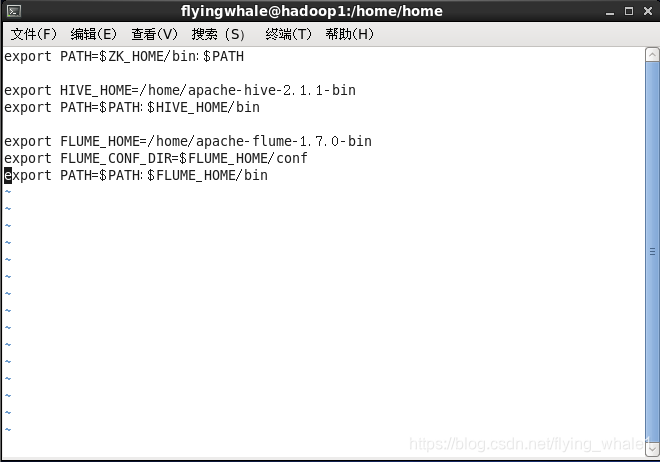

Configure the environment variables by appending the following to the end of the shell profile file:

    export FLUME_HOME=/home/apache-flume-1.7.0-bin
    export FLUME_CONF_DIR=$FLUME_HOME/conf
    export PATH=$PATH:$FLUME_HOME/bin
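Appending the same exports every time the tutorial is repeated leaves duplicate lines in the profile. The sketch below shows one way to add each line only once. The helper name `append_once` is hypothetical, and the demo writes to a scratch file rather than a real profile; which profile file to target (for example `/etc/profile`) is an assumption, since the original does not name it.

```shell
# append_once FILE LINE: add LINE to FILE only if an identical line is not already there.
append_once() {
    file=$1
    line=$2
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Demonstrate on a scratch file; point PROFILE at the real profile file once satisfied.
PROFILE=$(mktemp)
append_once "$PROFILE" 'export FLUME_HOME=/home/apache-flume-1.7.0-bin'
append_once "$PROFILE" 'export FLUME_CONF_DIR=$FLUME_HOME/conf'
append_once "$PROFILE" 'export PATH=$PATH:$FLUME_HOME/bin'
# Repeating a line leaves the file unchanged.
append_once "$PROFILE" 'export PATH=$PATH:$FLUME_HOME/bin'
cat "$PROFILE"
```

The single quotes keep `$FLUME_HOME` and `$PATH` unexpanded, so the variables are resolved when the profile is loaded, not when the line is written.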

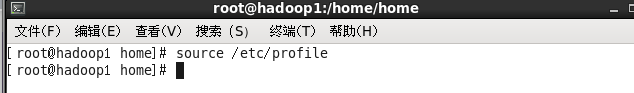

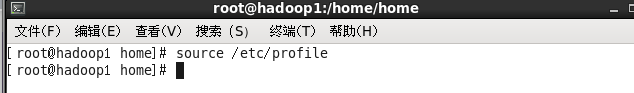

Make the environment variables take effect (for example, by re-sourcing the profile file):

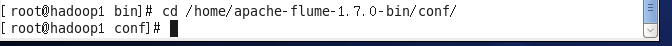

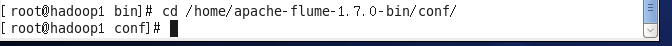

Change to the Flume configuration directory:

    cd /home/apache-flume-1.7.0-bin/conf/

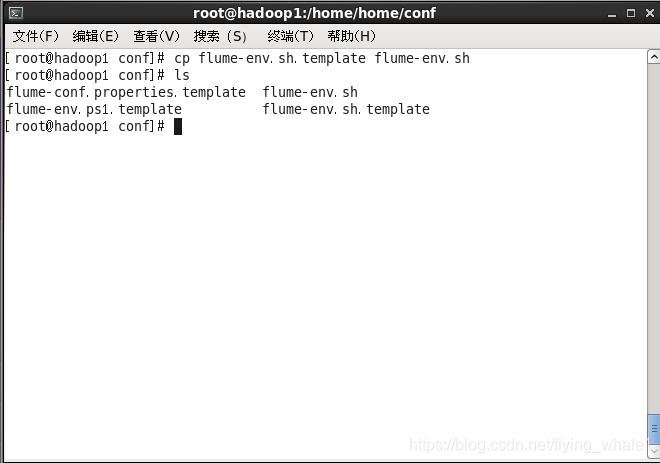

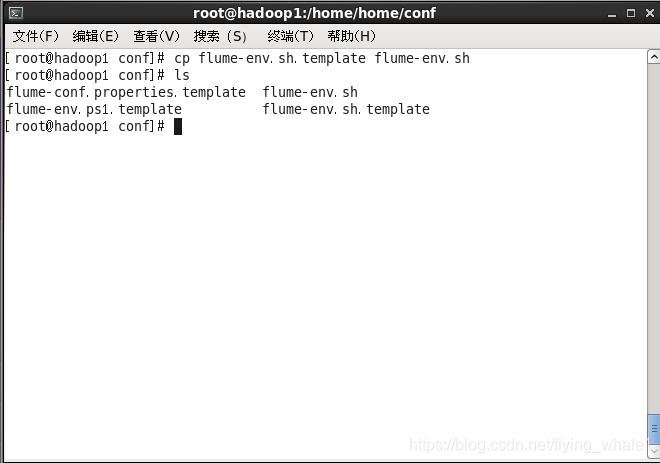

Copy the flume-env.sh template file:

    cp flume-env.sh.template flume-env.sh

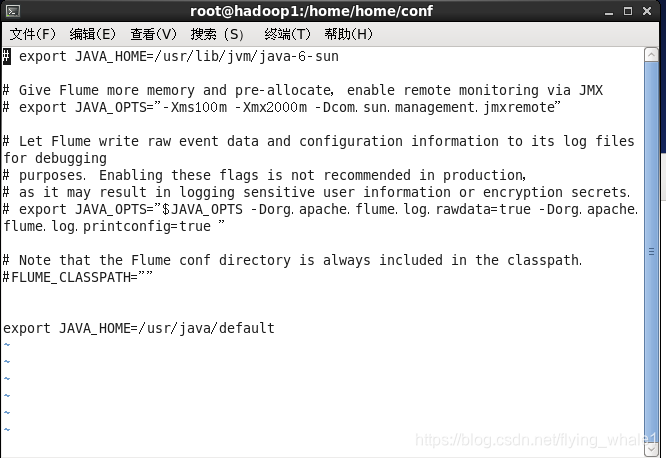

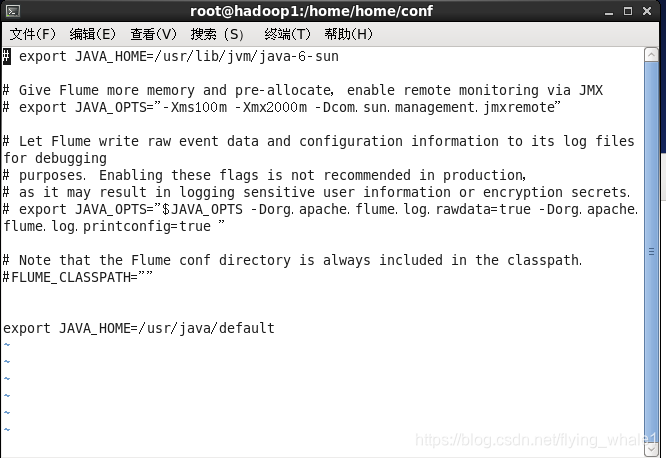

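Configure flume-env.sh by appending the following to the end of the file:

    # If the JDK was installed by unpacking an archive, set this to the unpacked installation directory
    export JAVA_HOME=/usr/java/default

Apply the environment configuration so that it takes effect.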

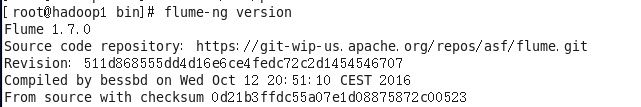

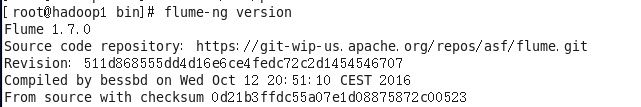

Verify that Flume was installed successfully.
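One way to run this check, sketched under the assumption that `$FLUME_HOME/bin` has been added to `PATH` as configured earlier: `flume-ng version` prints the installed version. The wrapper function `check_flume` is a hypothetical helper that reports a missing binary instead of failing with "command not found".

```shell
# check_flume: print the Flume version if flume-ng is on PATH, otherwise say what is missing.
check_flume() {
    if command -v flume-ng >/dev/null 2>&1; then
        flume-ng version
    else
        echo "flume-ng not found on PATH; re-check FLUME_HOME and PATH" >&2
        return 1
    fi
}

check_flume || true
```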

Using Flume

Receiving messages with an Avro source

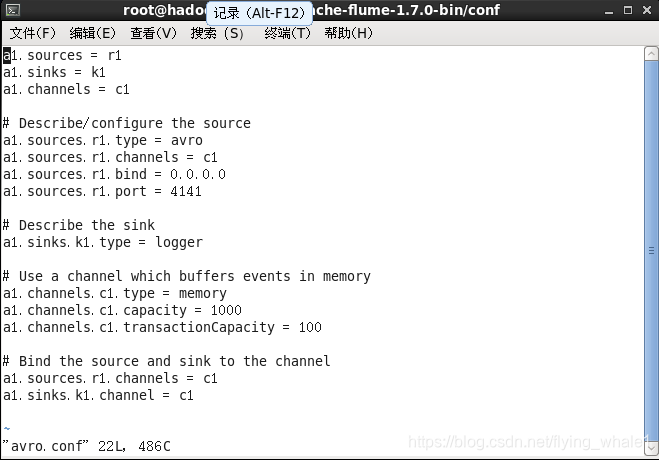

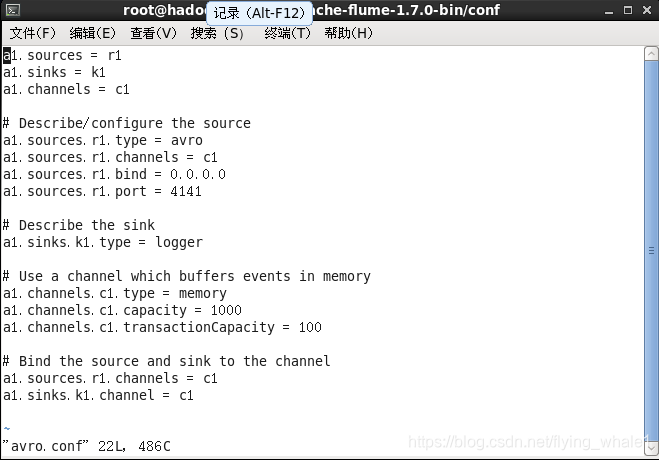

Create the agent configuration file:

    cd /home/apache-flume-1.7.0-bin/conf/
    vi avro.conf

Append the following to the end of the file:

    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = avro
    a1.sources.r1.channels = c1
    a1.sources.r1.bind = 0.0.0.0
    a1.sources.r1.port = 4141

    # Describe the sink
    a1.sinks.k1.type = logger

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1
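A common slip in agent files like this one is a source or sink that points at a channel name that was never listed under `a1.channels`. The sketch below is a rough pre-flight check for that one mistake only; it is not a substitute for Flume's own validation at startup. The function name `check_wiring` is hypothetical, and the demo uses a shortened copy of the configuration above written to a scratch file.

```shell
# check_wiring FILE AGENT: succeed only if every channel named by a source or sink
# of AGENT is also listed in "AGENT.channels".
check_wiring() {
    awk -v agent="$2" '
        /^[ \t]*#/ || NF == 0 { next }            # skip comments and blank lines
        {
            eq = index($0, "=")
            if (eq == 0) next
            key = substr($0, 1, eq - 1)
            val = substr($0, eq + 1)
            gsub(/[ \t]/, "", key)
            gsub(/^[ \t]+|[ \t]+$/, "", val)
            n = split(val, names, /[ \t]+/)
            if (key == agent ".channels") {
                for (i = 1; i <= n; i++) declared[names[i]] = 1
            } else if (key ~ ("^" agent "\\.(sources|sinks)\\.[^.]+\\.channels?$")) {
                for (i = 1; i <= n; i++) used[names[i]] = key
            }
        }
        END {
            bad = 0
            for (c in used) {
                if (!(c in declared)) {
                    printf "%s refers to undeclared channel %s\n", used[c], c
                    bad = 1
                }
            }
            exit bad
        }
    ' "$1"
}

# Demo on a scratch copy of the agent definition.
CONF=$(mktemp)
cat > "$CONF" <<'EOF'
a1.sources = r1
a1.sinks = k1
a1.channels = c1
a1.sources.r1.type = avro
a1.sources.r1.channels = c1
a1.sinks.k1.type = logger
a1.sinks.k1.channel = c1
a1.channels.c1.type = memory
EOF
check_wiring "$CONF" a1 && echo "wiring looks consistent"
```

Against the real file, the call would be `check_wiring avro.conf a1` from the conf directory.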

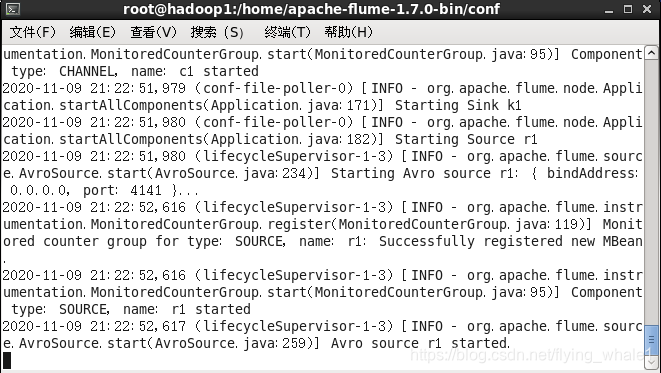

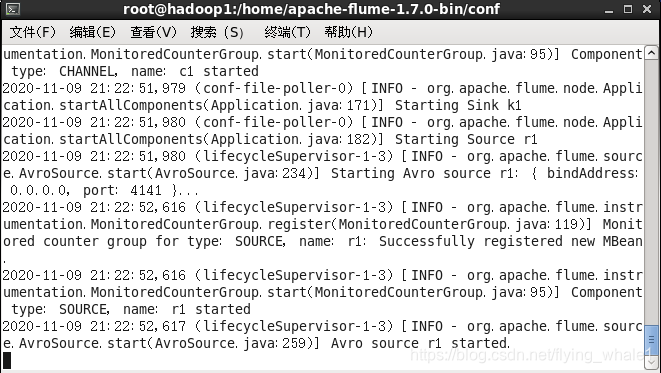

Start the agent with logging to the console:

    flume-ng agent -c . -f avro.conf -n a1 -Dflume.root.logger=INFO,console

Press Ctrl+C to exit the log console, but leave it running for now.

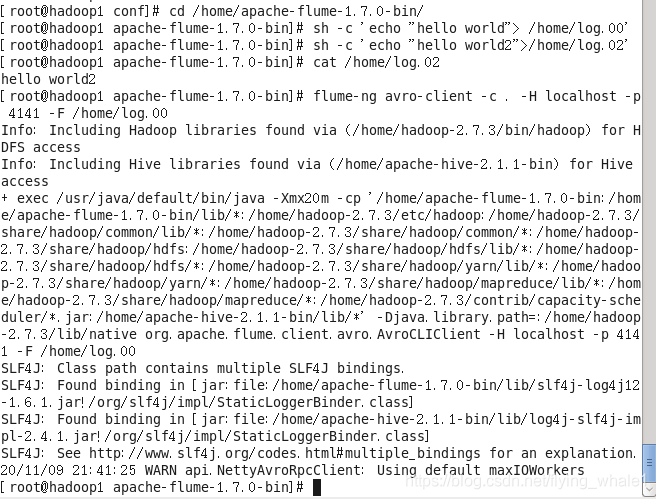

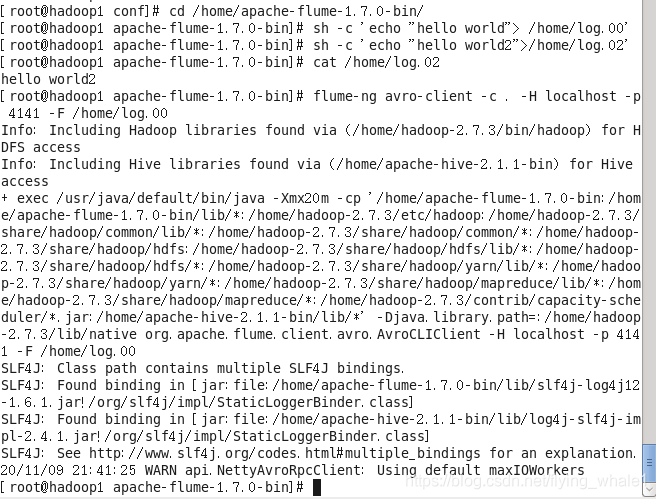

Open a new terminal, create a log file containing hello world, and send it to Flume:

    cd /home/apache-flume-1.7.0-bin/
    sh -c 'echo "hello world" > /home/log.00'
    flume-ng avro-client -c . -H localhost -p 4141 -F /home/log.00

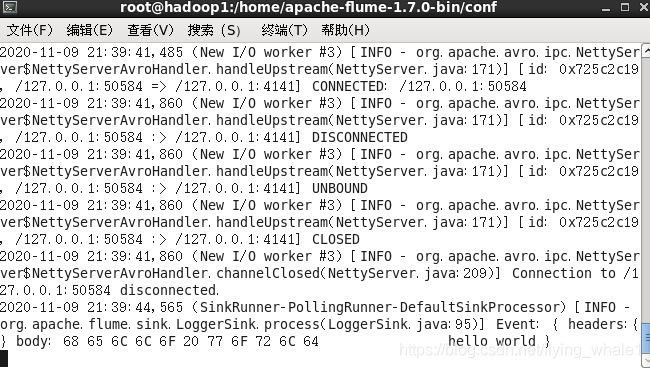

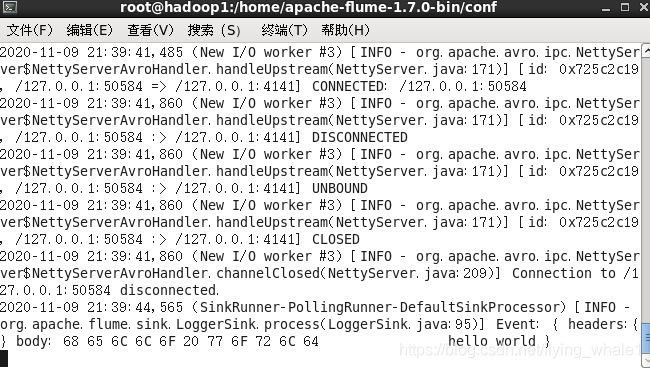

Switch back to the log console terminal; it has received the message.

Receiving messages with a Netcat source

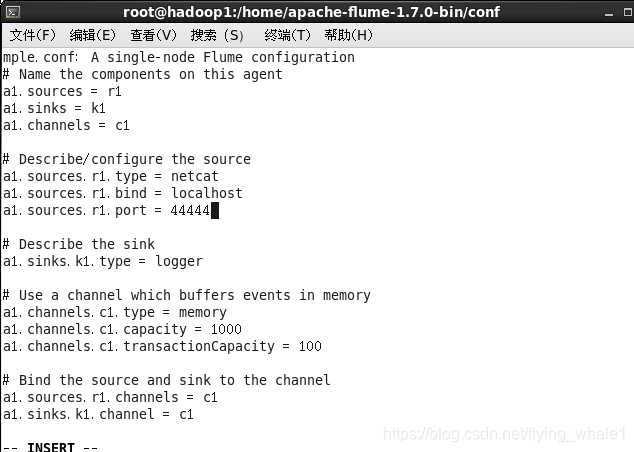

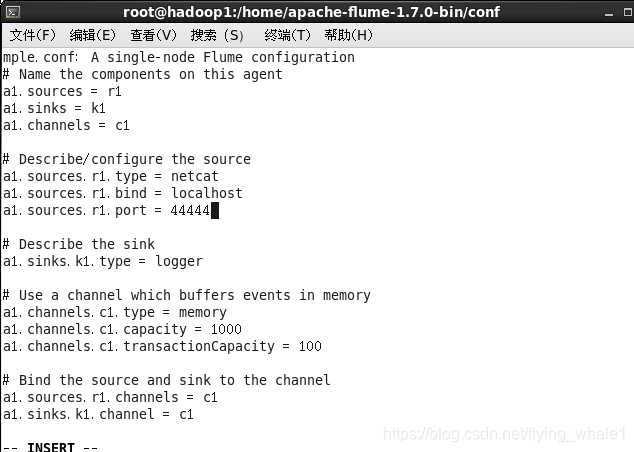

Create the agent configuration file:

    cd /home/apache-flume-1.7.0-bin/conf/
    vi example.conf

Append the following to the end of the file:

    # example.conf: A single-node Flume configuration

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = netcat
    a1.sources.r1.bind = localhost
    a1.sources.r1.port = 44444

    # Describe the sink
    a1.sinks.k1.type = logger

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

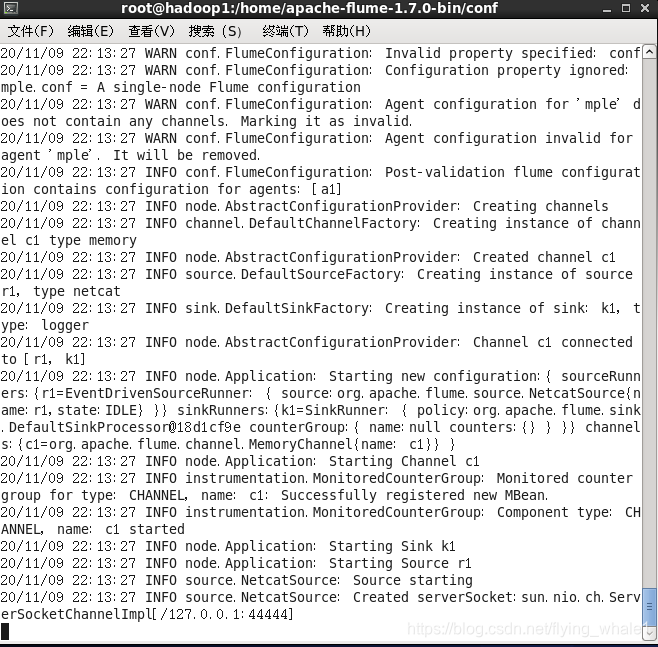

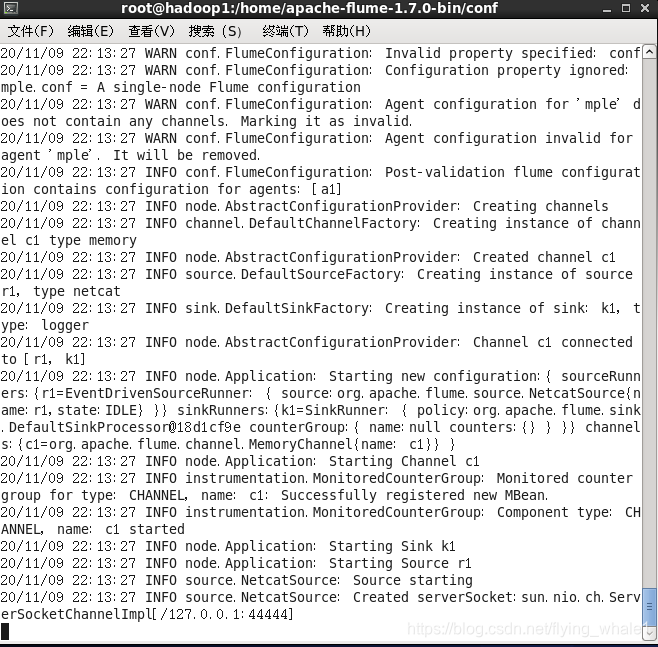

Start the agent with logging to the console:

    flume-ng agent --conf-file example.conf --name a1 -Dflume.root.logger=INFO,console

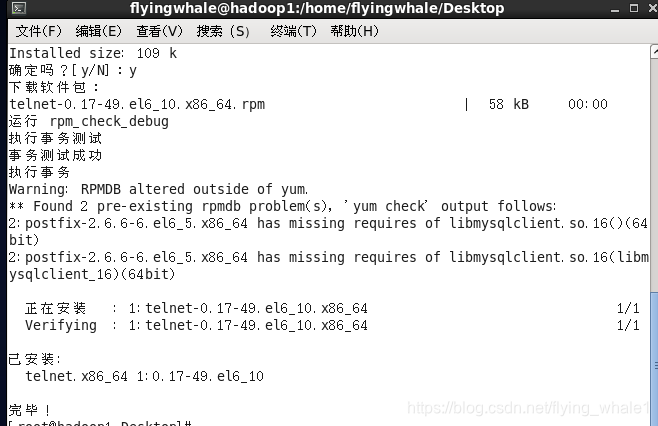

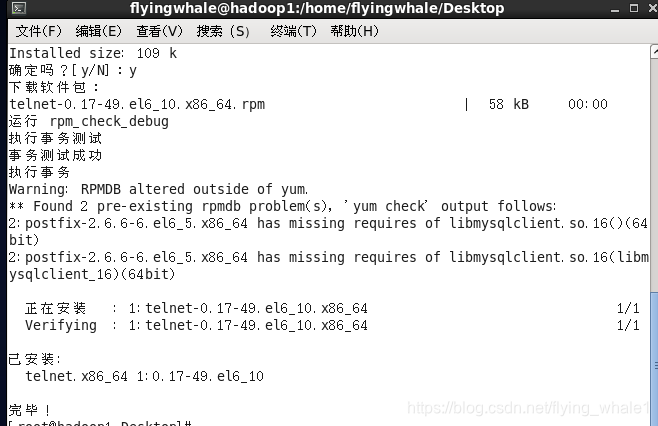

Install telnet (on CentOS, for example, with `yum install -y telnet`).

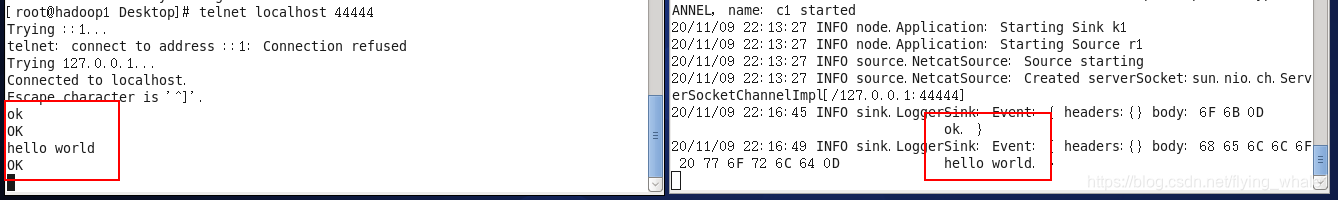

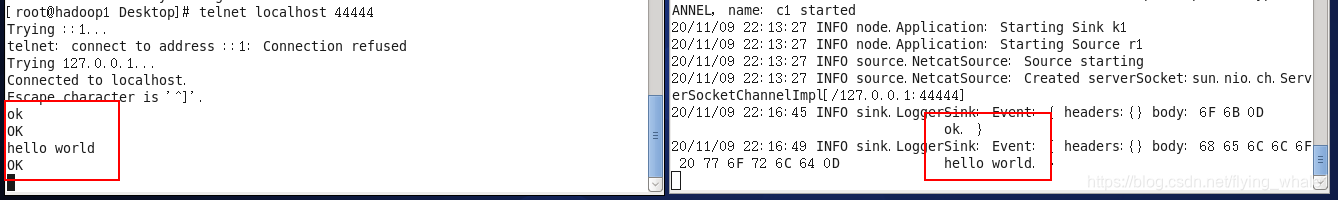

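Open another terminal and test whether local port 44444 is reachable (for example, with `telnet localhost 44444`).

Type ok and hello world in that terminal; the log console window displays each message as it is entered.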

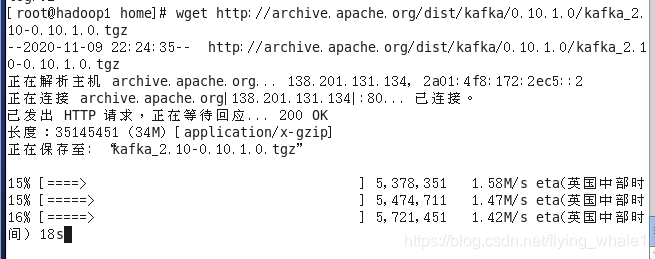

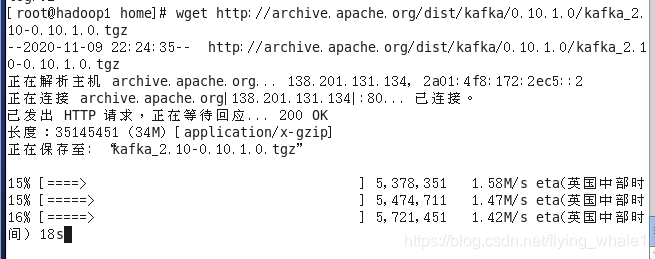
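If telnet is not available, the port can also be probed from the shell. The following is a minimal sketch that assumes bash is installed, since it relies on bash's `/dev/tcp` pseudo-device; the function name `port_open` is hypothetical. It only checks that something accepts connections on the port and does not send any data to the source.

```shell
# port_open HOST PORT: succeed if a TCP connection to HOST:PORT can be opened.
# Relies on bash's /dev/tcp pseudo-device, so the probe runs in a bash subprocess.
port_open() {
    bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

if port_open localhost 44444; then
    echo "port 44444 is accepting connections"
else
    echo "port 44444 is closed; is the agent running?"
fi
```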

Installing Kafka

Change to the /home directory.

Download Kafka 0.10.1:

    wget http://archive.apache.org/dist/kafka/0.10.1.0/kafka_2.10-0.10.1.0.tgz

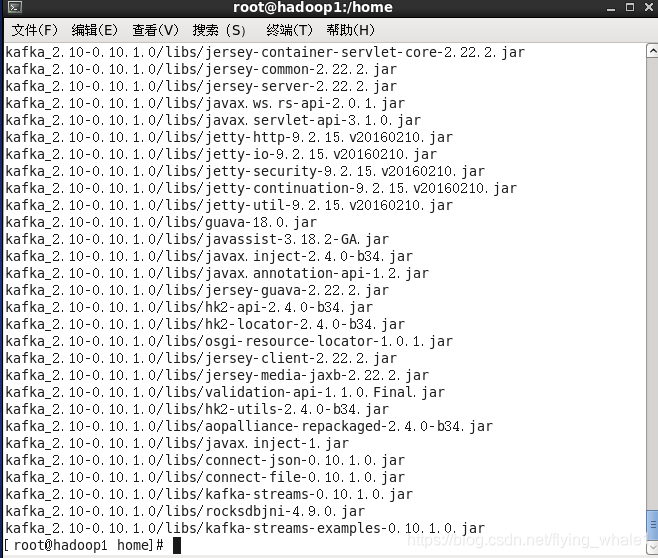

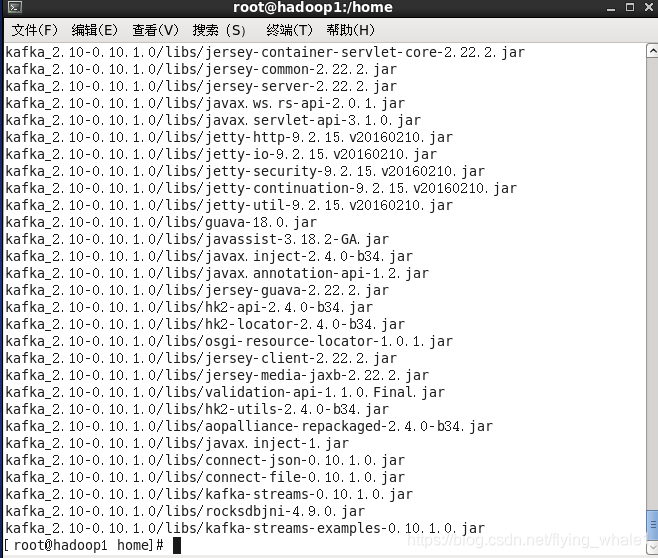

Extract the archive:

    tar xvf kafka_2.10-0.10.1.0.tgz

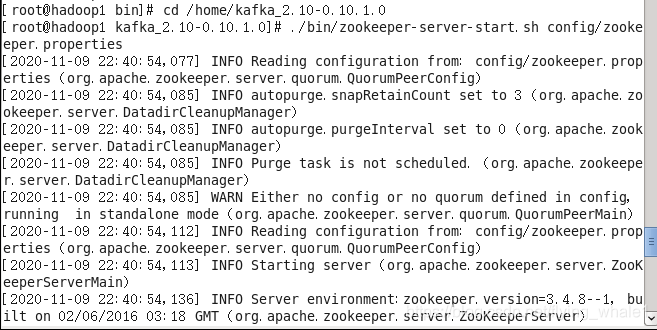

Change to the Kafka installation directory:

    cd /home/kafka_2.10-0.10.1.0/

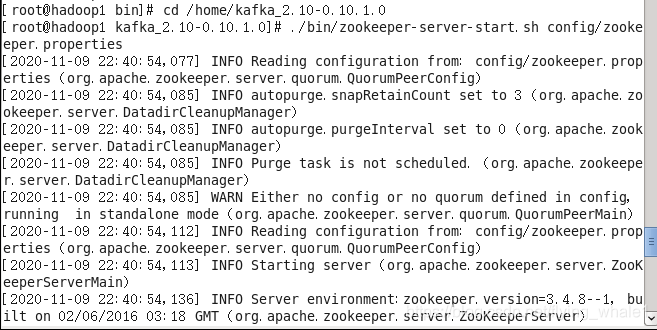

Start ZooKeeper:

    ./bin/zookeeper-server-start.sh config/zookeeper.properties

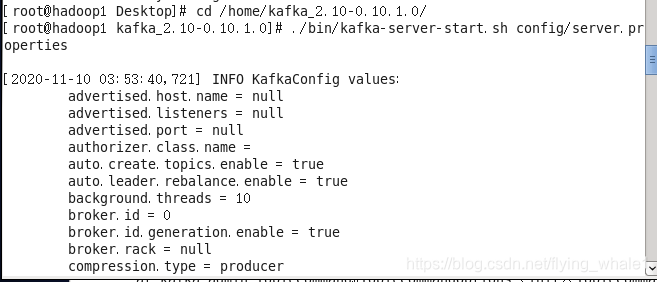

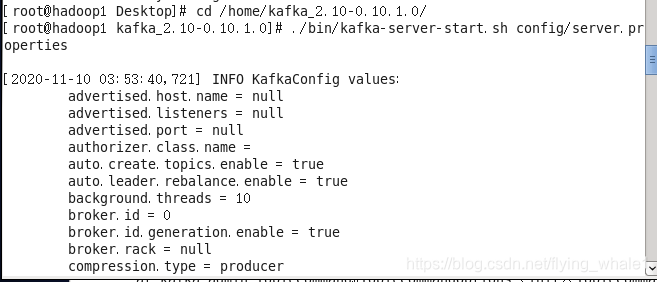

Open a new terminal and change to the Kafka installation directory:

    cd /home/kafka_2.10-0.10.1.0/

Start Kafka:

    ./bin/kafka-server-start.sh config/server.properties
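Both start scripts run in the foreground, which is why each one needs its own terminal. As an alternative sketch, the scripts shipped with Kafka accept a `-daemon` option that runs the process in the background. The wrapper function `start_kafka_stack` and the fixed five-second wait are illustrative assumptions, not part of the original walkthrough.

```shell
# start_kafka_stack KAFKA_DIR: start the bundled ZooKeeper and then the Kafka broker
# in the background, using the start scripts found under KAFKA_DIR.
start_kafka_stack() {
    kafka_dir=$1
    if [ ! -x "$kafka_dir/bin/kafka-server-start.sh" ]; then
        echo "no Kafka installation under $kafka_dir" >&2
        return 1
    fi
    "$kafka_dir/bin/zookeeper-server-start.sh" -daemon "$kafka_dir/config/zookeeper.properties"
    sleep 5   # crude wait so ZooKeeper is listening before the broker connects
    "$kafka_dir/bin/kafka-server-start.sh" -daemon "$kafka_dir/config/server.properties"
}

start_kafka_stack /home/kafka_2.10-0.10.1.0 || echo "Kafka stack was not started"
```

The remaining steps still work with the foreground approach; the only difference is that the startup logs no longer appear in a terminal window.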

Open a new terminal and change to the Kafka installation directory:

    cd /home/kafka_2.10-0.10.1.0/

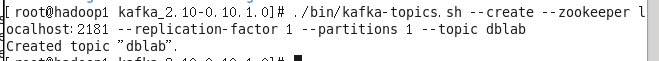

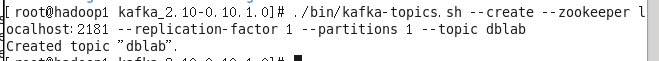

Create a topic named dblab:

    ./bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic dblab

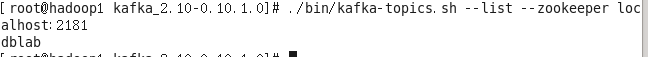

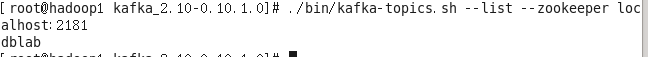

List the topics that have been created:

    ./bin/kafka-topics.sh --list --zookeeper localhost:2181

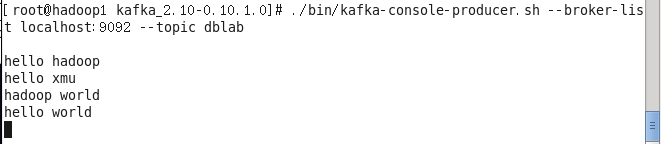

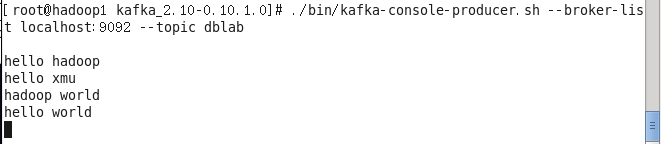

Start the producer:

    ./bin/kafka-console-producer.sh --broker-list localhost:9092 --topic dblab

After it starts (there is no prompt), type messages to produce data.

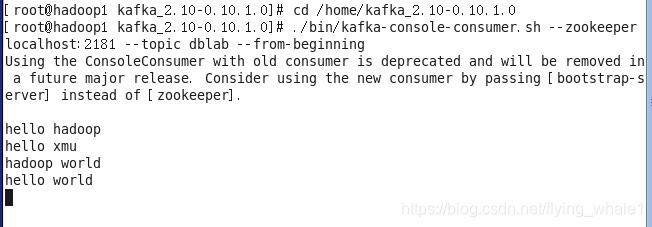

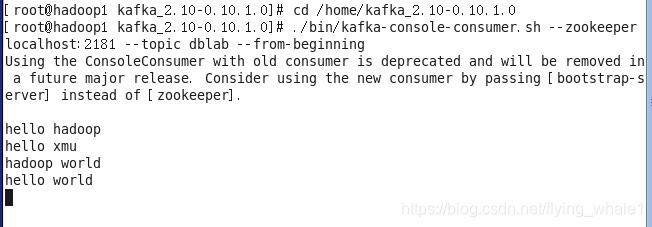

Open a new terminal and change to the Kafka installation directory:

    cd /home/kafka_2.10-0.10.1.0/

Start the consumer to receive the data:

    ./bin/kafka-console-consumer.sh --zookeeper localhost:2181 --topic dblab --from-beginning

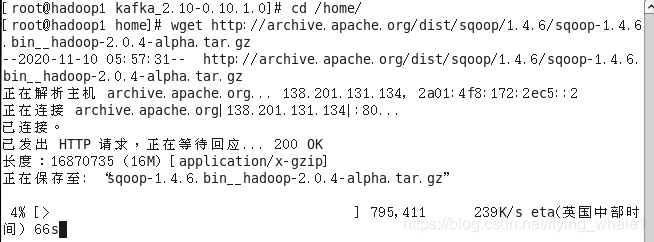

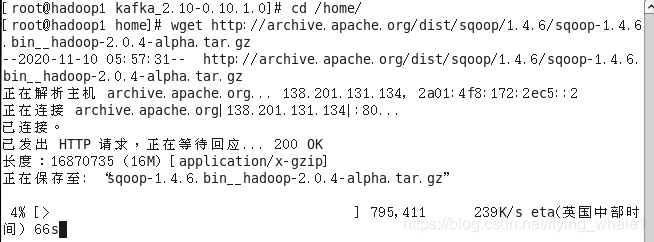

Installing Sqoop

Change to the /home directory.

Download Sqoop 1.4.6:

    wget http://archive.apache.org/dist/sqoop/1.4.6/sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz

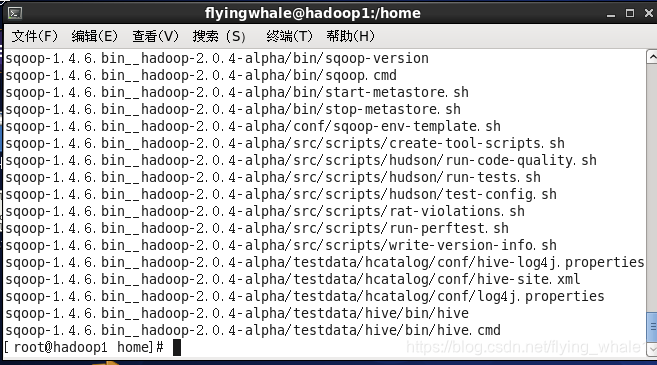

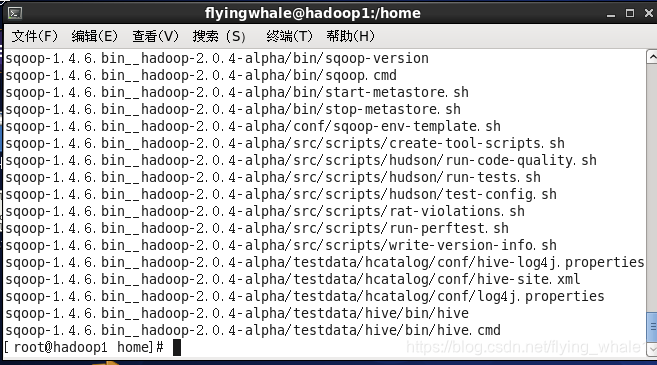

Extract the archive:

    tar xvf sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz

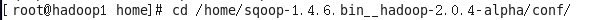

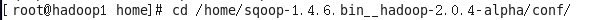

Change to the Sqoop configuration directory:

    cd /home/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/conf/

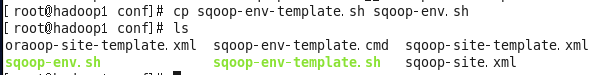

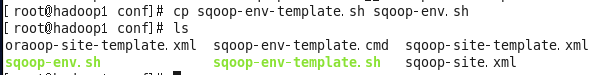

Copy the template for Sqoop's environment configuration file:

    cp sqoop-env-template.sh sqoop-env.sh

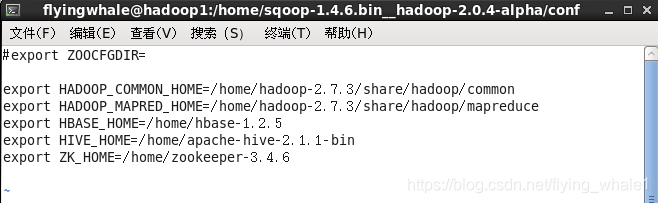

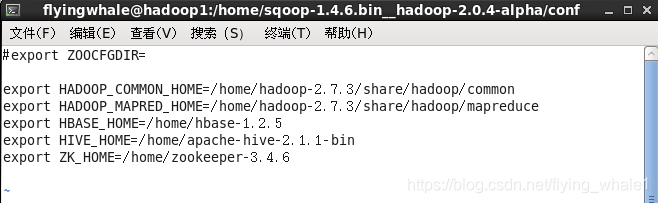

Configure the Sqoop environment file by appending the following to the end of sqoop-env.sh:

    # HADOOP_COMMON_HOME and HADOOP_MAPRED_HOME can also both be set to the same value as HADOOP_HOME.
    # HADOOP_HOME is the Hadoop installation directory. To locate it, run
    # find / -name hadoop-daemon.sh; the parent of the directory containing the result is the installation directory.
    export HADOOP_COMMON_HOME=/home/hadoop-2.7.3/share/hadoop/common
    export HADOOP_MAPRED_HOME=/home/hadoop-2.7.3/share/hadoop/mapreduce
    export HBASE_HOME=/home/hbase-1.2.5
    export HIVE_HOME=/home/apache-hive-2.1.1-bin
    # The ZooKeeper installation directory
    export ZOOKEEPER_HOME=/home/zookeeper-3.4.6
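These paths depend on where each component was unpacked, so a typo here tends to surface only later as a confusing Sqoop failure. The sketch below lists any of the variables that do not point at an existing directory. The helper name `missing_dirs` is hypothetical, and it assumes the variables have already been loaded into the current shell (for example by sourcing sqoop-env.sh).

```shell
# missing_dirs VAR...: print each named variable whose value is not an existing directory.
missing_dirs() {
    for name in "$@"; do
        eval "dir=\${$name:-}"
        [ -d "$dir" ] || echo "$name=${dir:-<unset>}"
    done
}

missing_dirs HADOOP_COMMON_HOME HADOOP_MAPRED_HOME HBASE_HOME HIVE_HOME ZOOKEEPER_HOME
```

No output means every variable resolves to a directory; each printed line names a variable to re-check.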

Apply the configuration file so that it takes effect.

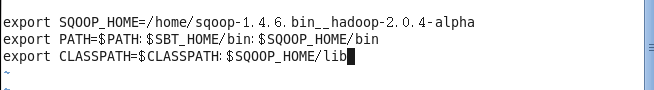

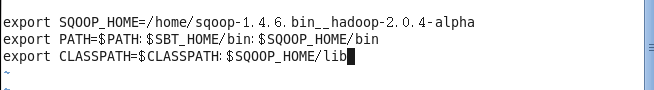

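Configure the system environment variable file by appending the following to the end of the file:

    export SQOOP_HOME=/home/sqoop-1.4.6.bin__hadoop-2.0.4-alpha
    export PATH=$PATH:$SBT_HOME/bin:$SQOOP_HOME/bin
    export CLASSPATH=$CLASSPATH:$SQOOP_HOME/lib

Apply the configuration file so that it takes effect.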

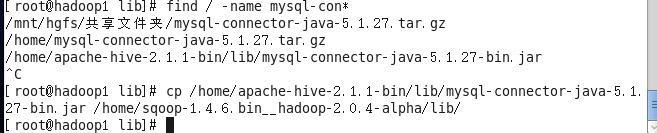

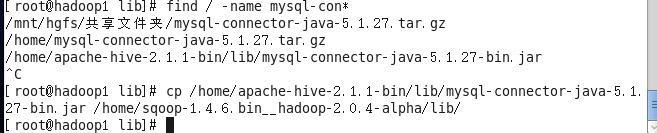

With MySQL already installed, change to Sqoop's lib directory:

    cd /home/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/lib

Download the MySQL driver:

    wget https://repo1.maven.org/maven2/mysql/mysql-connector-java/5.1.27/mysql-connector-java-5.1.27.jar

(Since I already had the MySQL driver, I simply copied it from its existing location into Sqoop's lib directory.)

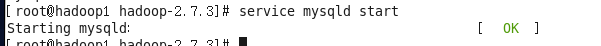

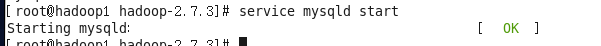

Start MySQL.

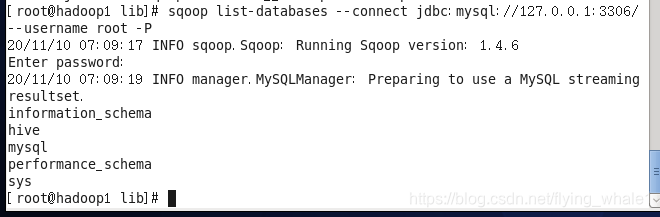

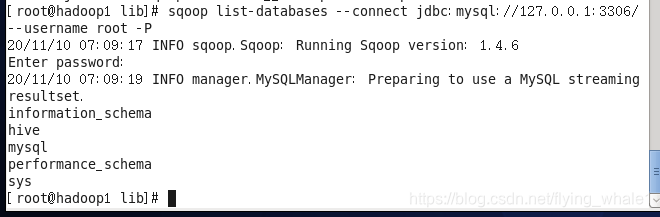

Test whether Sqoop can connect to MySQL:

    sqoop list-databases --connect jdbc:mysql://127.0.0.1:3306/ --username root -P

The command reports an error: ACCUMULO_HOME needs to be configured in sqoop-env.sh.

Change to the Sqoop configuration directory:

    cd /home/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/conf/

Edit sqoop-env.sh and append the following to the end of the file:

    export ACCUMULO_HOME=/var/lib/accumulo

Apply the configuration file so that it takes effect.

Create the accumulo directory:

    mkdir /var/lib/accumulo

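Run the test again. It reports another error, so configure HCAT_HOME in the same way.

Change to the Sqoop configuration directory:

    cd /home/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/conf/

Edit sqoop-env.sh and append the following to the end of the file:

    export HCAT_HOME=/var/lib/hcat

Apply the configuration file so that it takes effect.

Create the hcat directory at the path set above (/var/lib/hcat).

Run the test again; this time Sqoop connects to MySQL successfully:

    sqoop list-databases --connect jdbc:mysql://127.0.0.1:3306/ --username root -P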
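The two fixes above follow the same pattern: create a placeholder directory and export a variable that points to it. A plain `mkdir` fails if the directory already exists, so the sketch below uses `mkdir -p` and adds the export line only when it is missing, which makes the steps safe to repeat. The function name `ensure_home` is hypothetical, and the demo runs under a scratch directory instead of touching `/var/lib` or the real sqoop-env.sh.

```shell
# ensure_home ENV_FILE VAR DIR: create DIR if needed and make sure ENV_FILE exports VAR=DIR.
ensure_home() {
    env_file=$1
    var=$2
    dir=$3
    mkdir -p "$dir" || return 1
    line="export $var=$dir"
    grep -qxF -- "$line" "$env_file" 2>/dev/null || printf '%s\n' "$line" >> "$env_file"
}

# Demonstrated under a scratch directory; on a real system ENV_FILE would be
# conf/sqoop-env.sh and the directories /var/lib/accumulo and /var/lib/hcat.
SCRATCH=$(mktemp -d)
ENV_FILE=$SCRATCH/sqoop-env.sh
ensure_home "$ENV_FILE" ACCUMULO_HOME "$SCRATCH/accumulo"
ensure_home "$ENV_FILE" HCAT_HOME "$SCRATCH/hcat"
cat "$ENV_FILE"
```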
